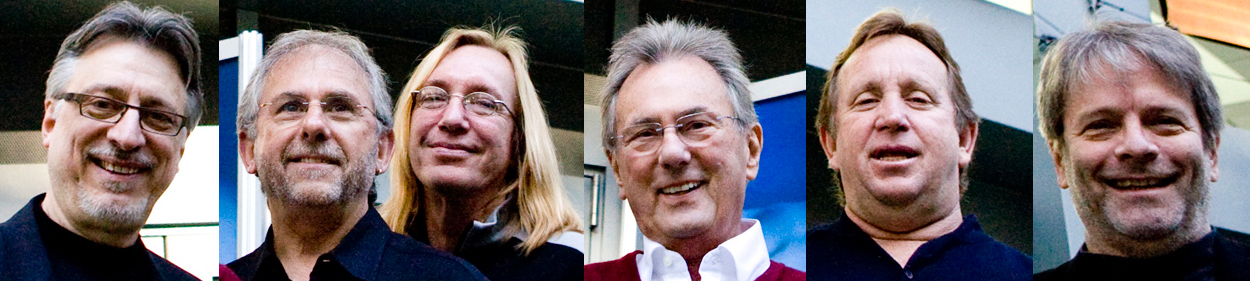

The METAlliance—Al Schmitt, Chuck Ainlay, Ed Cherney, Elliot Scheiner, Frank Filipetti and George Massenburg—has the dual goals of mentoring through “In Session” events, and conveying to audio professionals and semi-professionals our choices for the highest quality hardware and software by shining a light on products worthy of consideration through a certification process and product reviews in this column. Its mission is to promote the highest quality in the art and science of recording music.

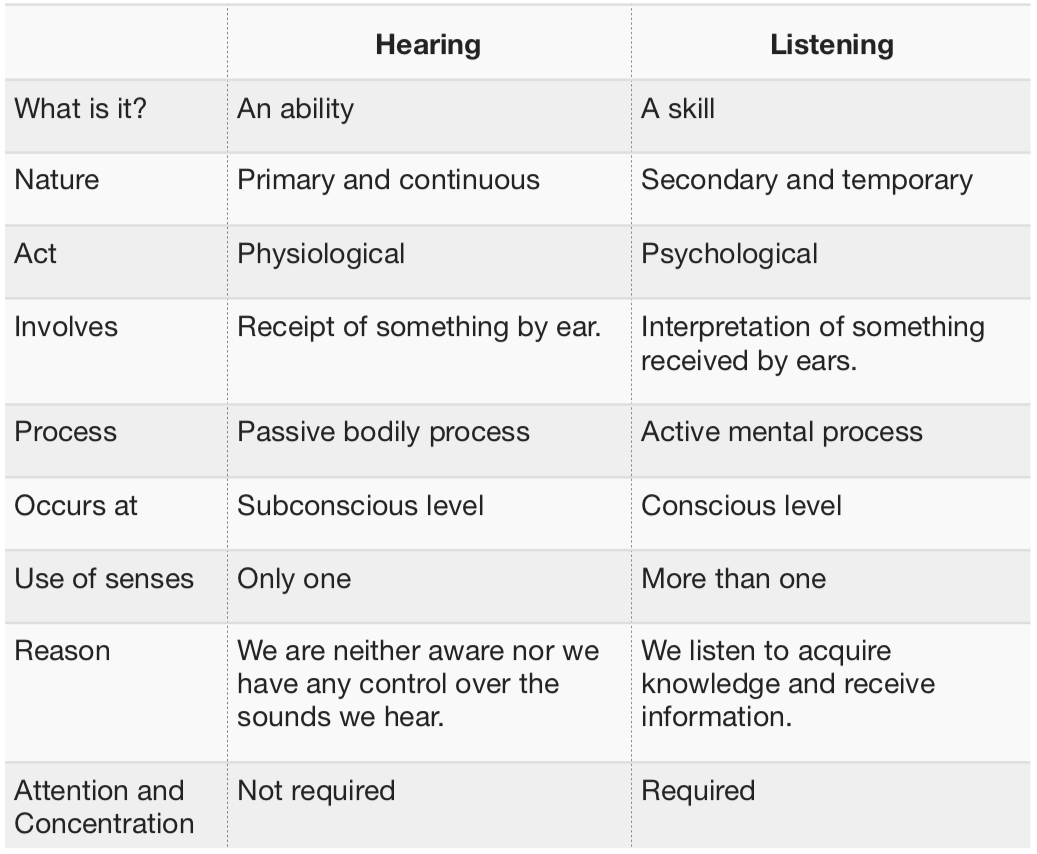

There are differences between hearing and listening worth touching on: one “hears” with one’s brain, not only with one’s ears. Hearing is the act of perceiving sound by the ear. Listening is a conscious mental process, as much about the brain as the ear, and it starts with establishing attention to a sound.

There are different kinds of listening—for instance, appreciative listening, which is exactly what the name implies: listening to good music, poetry or information. Then there’s comprehension listening. There’s content or informative listening. One often uses discriminative listening to identify differences between sounds. And there’s relationship listening, as when, for example, your significant other asks something important like, “What time are you going to be home from the session?” There’s also empathetic listening, employed to better understand feelings and emotions. And then there’s critical listening and evaluative listening.

On the other hand, there are also listening modes to avoid, such as biased listening.

We can think of the process of listening as follows: The brain receives surprisingly small packets of data from the ear via the auditory vestibular nerve, and that signal is processed and evaluated. Not only are pitch and loudness features extracted, but also other critical attributes, such as temporal features (timing) and differential timing cues from each ear. We compare this experience with memories of prior experiences, sounds and feelings that resulted—and then we do something with that impression, even if only just associating a description in words with the experience and remembering it.


Critical listening is work. It may be the hardest job you’ll do, because it tests the quality of your “attention” and your ability to concentrate. (We’re living at a point in time where concentration itself is a test.)

Let’s start by restating how important listening skills and tools are to our work in professional audio. Without them, we’re just guessing. To start with, what are you “hearing”? Start by identifying—and naming—what you’re listening for. Real truths lurk everywhere, waiting to be uncovered.

For the moment, let’s narrow it down to evaluating music and evaluating technology, although there are aspects shared between them. For either, you’ll need to have a well-understood, neutral listening environment; minimize the signal chain, evaluating and optimizing each component in it; have the ability to measure and calibrate loudness (as measured in LUFS); be prepared to reduce distractions, interruptions and talking; and identify and eliminate prejudices and expectations (ignore costs).

For evaluating music, there are at least two basic ways to listen when working on music productions professionally. To achieve usable results, you’ll need to listen both as an engineer and as a producer. Both aspects of recorded music play big roles in how music is perceived and eventually accepted downstream. Deconstructing and evaluating recorded music can take several different tracks. You might listen from musical perspectives (performance, composition, historical features), or you might listen to better understand technical issues, features and problems. Most importantly, you’ll want to keep your judgments in these different areas distinct.


Some examples: An otherwise virtuoso performance might be perceived to be “out of tune” or include suspect intonation. A singer might technically have suspect pitch (as observed on Auto-Tune, Melodyne or other pitch-correction processes), but that’s not the whole picture. Music has historically been performed in other “temperaments.” Temperament is a lot more fluid in an orchestra playing without a piano; generally, the whole orchestra will play with a modern pitch reference (e.g., A 440). World-class musicians will often have a good enough pitch sense that they will play notes very close to equal temperament, if not right on. But they don’t have to. And you’d want to be sensitive to this, listening to the performance and keeping that response separate from your response to the music technology. Also, sometimes in pop music, a singer is mostly telling a story, and that story might be best told while taking liberties with pitch.
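To put a number on how far a note can sit from equal temperament and still be musically “right,” here is a minimal sketch that converts a frequency into its offset, in cents, from the nearest equal-tempered note. The function name `nearest_equal_tempered` is hypothetical, chosen for illustration, and the example assumes the A 440 reference mentioned above plus a justly tuned major third (frequency ratio 5:4), a standard textbook interval rather than anything measured from a real performance.

```python
import math

A4_HZ = 440.0  # modern pitch reference (A 440), as mentioned in the text


def nearest_equal_tempered(freq_hz, ref_hz=A4_HZ):
    """Return (semitones_from_ref, cents_off) for the nearest 12-tone
    equal-tempered note. Negative cents_off means the note is flat."""
    semitones = 12 * math.log2(freq_hz / ref_hz)
    nearest = round(semitones)
    cents_off = 100 * (semitones - nearest)
    return nearest, cents_off


# Assumed example: a justly tuned major third above A4 (ratio 5:4 = 550 Hz).
just_third = A4_HZ * 5 / 4
steps, cents = nearest_equal_tempered(just_third)
print(steps, round(cents, 1))  # 4 -13.7
```

A pitch display would show that third as nearly 14 cents flat, yet against the other notes of the chord it is perfectly in tune, which is one reason a pitch meter is not the whole picture.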

So I’d suggest that we’re going to “listen” to music with at least two ears if not three: We’ll listen “technically,” we’ll listen with a “musical ear” (maybe listening for “groove”) and sometimes we’ll listen from our guts (think listening for “punch,” as in dance music). One is well-advised to be able to differentiate between one’s responses, and choose a course of action very carefully.

Comparative testing between technologies is also tricky. First, one should always “blindfold” the tests, becoming familiar with the various methodologies to do so—for instance, A-B, A-B-C-HR (or hidden reference) or A-B-X. The latter two are used to detect audible differences blindly, thereby removing personal bias or the placebo effect and, over multiple trials, estimating the probability that the tester was guessing. For more on various blind tests for audio, go to https://bit.ly/2RMiEP1.
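As a rough illustration of “estimating the probability that the tester was guessing,” the sketch below computes a one-sided binomial probability: the chance of getting at least a given number of trials right if every answer were a coin flip. The function name `p_value_guessing` and the 12-out-of-16 score are assumptions made for the example, not results from any actual test.

```python
from math import comb


def p_value_guessing(correct, trials, chance=0.5):
    """Probability of at least `correct` right answers in `trials`
    independent trials if the listener is purely guessing."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )


# Assumed example: 12 correct identifications out of 16 A-B-X trials.
print(round(p_value_guessing(12, 16), 4))  # 0.0384
```

With that hypothetical score, a guesser would do as well or better a little under 4 percent of the time. More trials make the estimate more convincing; a handful of trials rarely proves anything either way.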


I typically use three flavors of testing. A-B tests only go so far, but are useful for a quick “ear reset.” For instance, when mixing, I’ll EQ something, say a vocal or snare drum. I’ll save that setting, but often come back and make changes to it, saving the new setting. Then, blinding the test (not looking at the EQ after setting the trackball to the A/B button on the EQ), I’ll randomize the A/B and listen to whether I really have a preference for one or the other.

Also, using straight-ahead A-B’ing (as we practice in McGill’s Sound Recording Master’s Advanced Technical Ear Training class), we’ll give students a track or a mix (compared to a reference mix) and ask them to, by ear, duplicate the EQ, or the dynamics, or the reverb or the whole mix!

For audio technology development, for instance a comparison between two equalizers (boxes or plugins), I’ll turn to more sophisticated, sometimes multiple, methods. I’ll begin with pure tech measurements to establish equivalent performance, ensuring a level playing field (which is often made difficult because of different manufacturers’ approaches to calibration), and set up exactly equivalent signal chains. Then I’ll move to A-B-X, giving two known samples (Sample A, the first reference, and Sample B, the second reference) followed by one unknown Sample X that is randomly selected from either A or B. With A-B-X listening, I’ll randomize the trials, most often having an assistant carefully write down the random assignments. If need be, I’ll change to an approach where I’ll listen to a sequence such as A-A-B-A, where I’ll try to pick out the “odd man out.”
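The randomized assignments an assistant writes down can also be drawn up in advance. The following is a minimal sketch, assuming each trial’s X is picked independently and with equal probability from A or B; the helper names `make_abx_schedule` and `score` are hypothetical, and the listener’s answers are a placeholder rather than real data.

```python
import random


def make_abx_schedule(n_trials, seed=None):
    """Return a list such as ['B', 'A', 'A', ...] naming which known
    sample plays as X on each trial. Keep it hidden from the listener."""
    rng = random.Random(seed)
    return [rng.choice("AB") for _ in range(n_trials)]


def score(schedule, answers):
    """Count the trials where the listener's answer matches the hidden X."""
    return sum(1 for truth, guess in zip(schedule, answers) if truth == guess)


schedule = make_abx_schedule(16)  # the assistant's private answer key
answers = list(schedule)          # placeholder: a listener who is always right
print(score(schedule, answers), "of", len(schedule))
```

Passing a `seed` makes the sheet reproducible, which helps if the same sequence needs to be rerun later; leaving it unset gives a fresh random order for each session.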


Most importantly, where features are subtle, I’ll widen the listening window and take time to relax to prepare for critical listening. Mindfulness meditation, the practice of being fully present in each moment without judgment, is one technique for this. It’s also often important to listen to longer samples (minutes instead of seconds) and to repeat these tests until you’ve got a clear picture of what you’re listening to.

METAlliance • www.metalliance.com